Autonomous Vehicles

Autonomous vehicle technology is progressing faster than ever, to the point where fully autonomous vehicles are already driving on public roads.

Where are we at?

Great question. According to Automotive World[1], we've already begun introducing autonomous vehicles at a steady, albeit extremely slow, pace. In the United Kingdom, vehicles fitted with Automated Lane Keeping Systems (ALKS) are being allowed on certain roads.

Take Toyota, for example, which is planning to build a "City for Self-Driving Cars"[2]. The city is slated for construction at the base of Mount Fuji, and its streets are planned to be split three ways so that autonomous vehicles, regular vehicles, and pedestrians can be safely separated.

Other companies, like China-based AutoX, are also getting a big boost. The Chinese government is extremely influential in accelerating this process[3] because it can help with legislative issues; it has even set up 30-mile "self-driving zones" where self-driving cars can roam freely. In addition, roadside infrastructure that uses V2X ("vehicle to everything") technology lets vehicles gain situational awareness beyond what their own sensors can see.

There's a lot to look forward to, but at the moment there are still quite a few challenges preventing these theories and small-scale tests from becoming mainstream.

What still needs to happen to overcome challenges?

For one, vehicle safety.

It's already known that with every level of automation, vehicles become just a little safer[4], but as with all new technologies, we tend to take accidents caused by autonomous vehicles much more seriously than traditional vehicle accidents. Over 36,000 people were killed in motor vehicle crashes in 2019 alone (nearly 100 people every day - and this is just in the United States!), yet we often dismiss these deaths. In contrast, a single autonomous vehicle crash often makes nationwide headlines.
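As a quick sanity check on the "nearly 100 people every day" figure, here is a minimal Python sketch. It assumes a round 36,000 deaths (the text only says "over 36,000", so this is a lower bound) spread evenly across a 365-day year; the function and constant names are purely illustrative.

```python
# Rough check of the per-day figure quoted in the text.
# Assumption: exactly 36,000 deaths, used as a lower bound for "over 36,000".
US_ROAD_DEATHS_2019 = 36_000
DAYS_IN_YEAR = 365


def deaths_per_day(annual_deaths: int, days: int = DAYS_IN_YEAR) -> float:
    """Average daily deaths, assuming an even spread over the year."""
    return annual_deaths / days


if __name__ == "__main__":
    print(round(deaths_per_day(US_ROAD_DEATHS_2019), 1))  # 98.6
```

Since the real total is somewhat above 36,000, the true daily average sits a little higher, which is consistent with "nearly 100".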

The other big issue is ethics: do you trust a machine with a person's life, or even with several lives?

What are the ethical obstacles?

There are quite a few. Moral Machine lets anyone test their own moral compass against some of the more... morbid... dilemmas, but there are also plenty of mundane day-to-day questions being asked every second by a self-driving car.

"How will an autonomous car overtake another car?"

"What will it do when it senses a pedestrian?"

"How do we collect enough data to drive safely?"

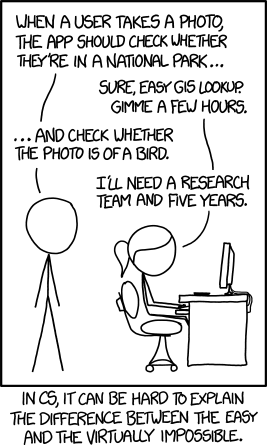

These are just some of the questions Zoox[5] had to ask when designing its fully autonomous self-driving cars, and they were almost certainly asked by every other autonomous car company too. Computers don't "see" the same way we do - if anything, the fact that we've achieved computer vision at all using lasers and sensors is quite astonishing.

The other ethical obstacle is simply public acceptance. Even if autonomous vehicles turn out to be a thousand times safer than conventional vehicles, and cause tens of deaths per year rather than tens of thousands, people will remain hesitant about them for a good while.

Remember when people protested against cars when they were first invented?

It's normal to be wary of emerging technology. The risks and benefits are uncertain, and the long-term implications are hazy. That being said, while the future isn't particularly clear, we can be pretty optimistic that autonomous vehicles are coming sooner rather than later.
